Photogrammetry with COLMAP

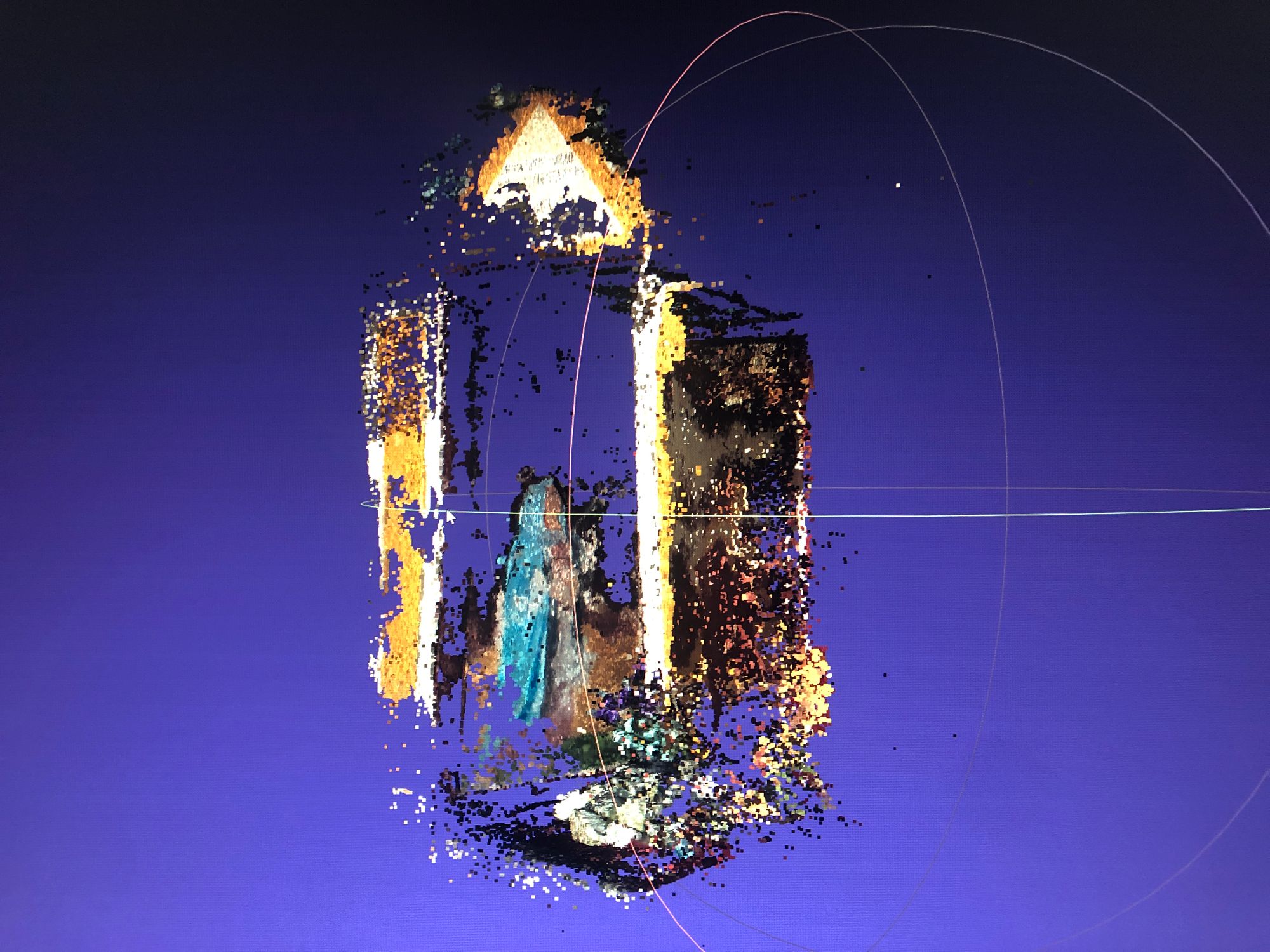

COLMAP can take a pile of images and reconstruct a 3D model from them. It needs no additional information to do this, and that's pretty damn cool. However, it's understandably slow. COLMAP is actually a pipeline of separate operations that builds a database along the way. We can speed this process up a lot by providing "hints" about the scene, such as where each image was taken, in that database format.
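As a rough illustration of what a pose "hint" can look like, here is a minimal sketch that turns one tracked camera pose into an entry for COLMAP's text-format `images.txt`, which is one documented way of handing known poses to COLMAP. It is not necessarily what the scripts in this project do. The function names are made up for this example, and it assumes the tracker pose has already been converted to COLMAP's camera axis convention (x right, y down, z forward) and is given as a camera-to-world unit quaternion `(w, x, y, z)` plus the camera position in world space. COLMAP stores the inverse (world-to-camera) transform, so the sketch inverts the pose first.

```python
def quat_conjugate(q):
    """Inverse of a unit quaternion (w, x, y, z)."""
    w, x, y, z = q
    return (w, -x, -y, -z)


def quat_multiply(a, b):
    """Hamilton product of two quaternions in (w, x, y, z) order."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def rotate(q, v):
    """Rotate 3-vector v by unit quaternion q."""
    p = (0.0, v[0], v[1], v[2])
    _, x, y, z = quat_multiply(quat_multiply(q, p), quat_conjugate(q))
    return (x, y, z)


def world_to_camera(cam_to_world_q, cam_center):
    """Convert a camera-to-world pose into COLMAP's world-to-camera (q, t)."""
    q_wc = quat_conjugate(cam_to_world_q)
    # COLMAP's translation is t = -R * C, where C is the camera centre.
    t = tuple(-c for c in rotate(q_wc, cam_center))
    return q_wc, t


def images_txt_entry(image_id, camera_id, name, cam_to_world_q, cam_center):
    """Build the two-line images.txt entry for one image.

    Line 1: IMAGE_ID QW QX QY QZ TX TY TZ CAMERA_ID NAME
    Line 2: the image's 2D keypoints, left empty when only the pose is known.
    """
    q_wc, t = world_to_camera(cam_to_world_q, cam_center)
    numbers = " ".join(f"{v:.9g}" for v in (*q_wc, *t))
    return f"{image_id} {numbers} {camera_id} {name}\n\n"


if __name__ == "__main__":
    # Hypothetical pose: camera at (1, 2, 3) with no rotation.
    print(images_txt_entry(1, 1, "frame_0001.jpg", (1.0, 0.0, 0.0, 0.0), (1.0, 2.0, 3.0)), end="")
```

A real tracker will usually report poses in its own axis convention and at the tracker's position rather than the lens, so an extra fixed transform between puck and camera would be needed before this step.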

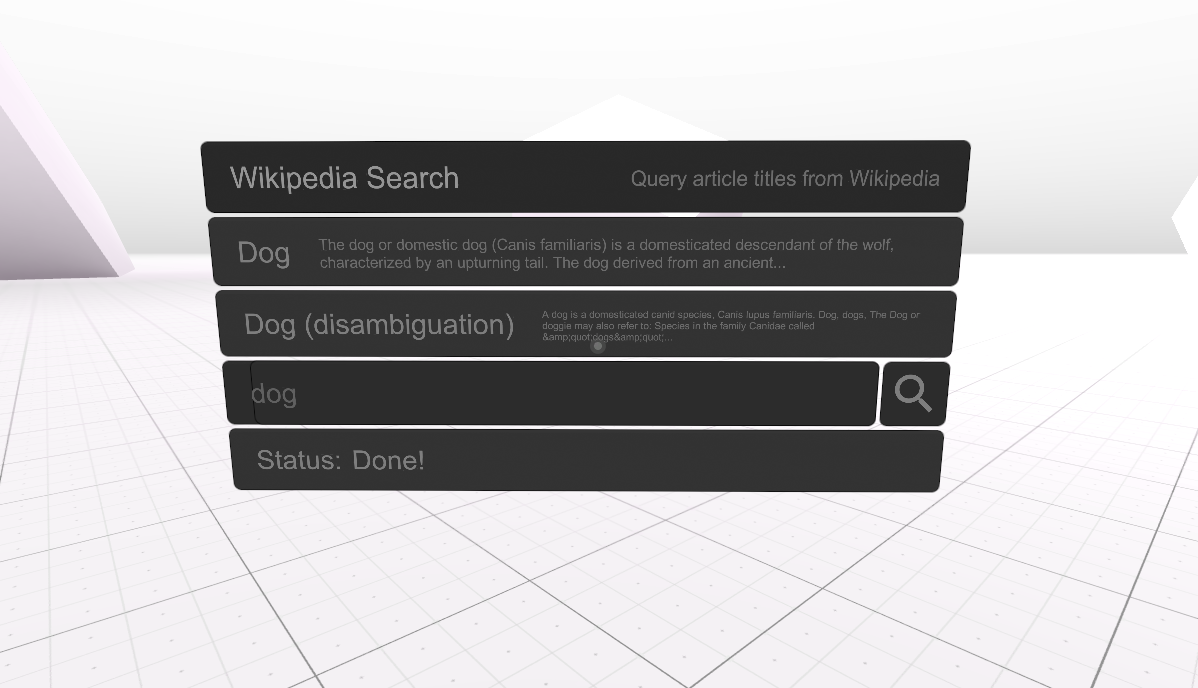

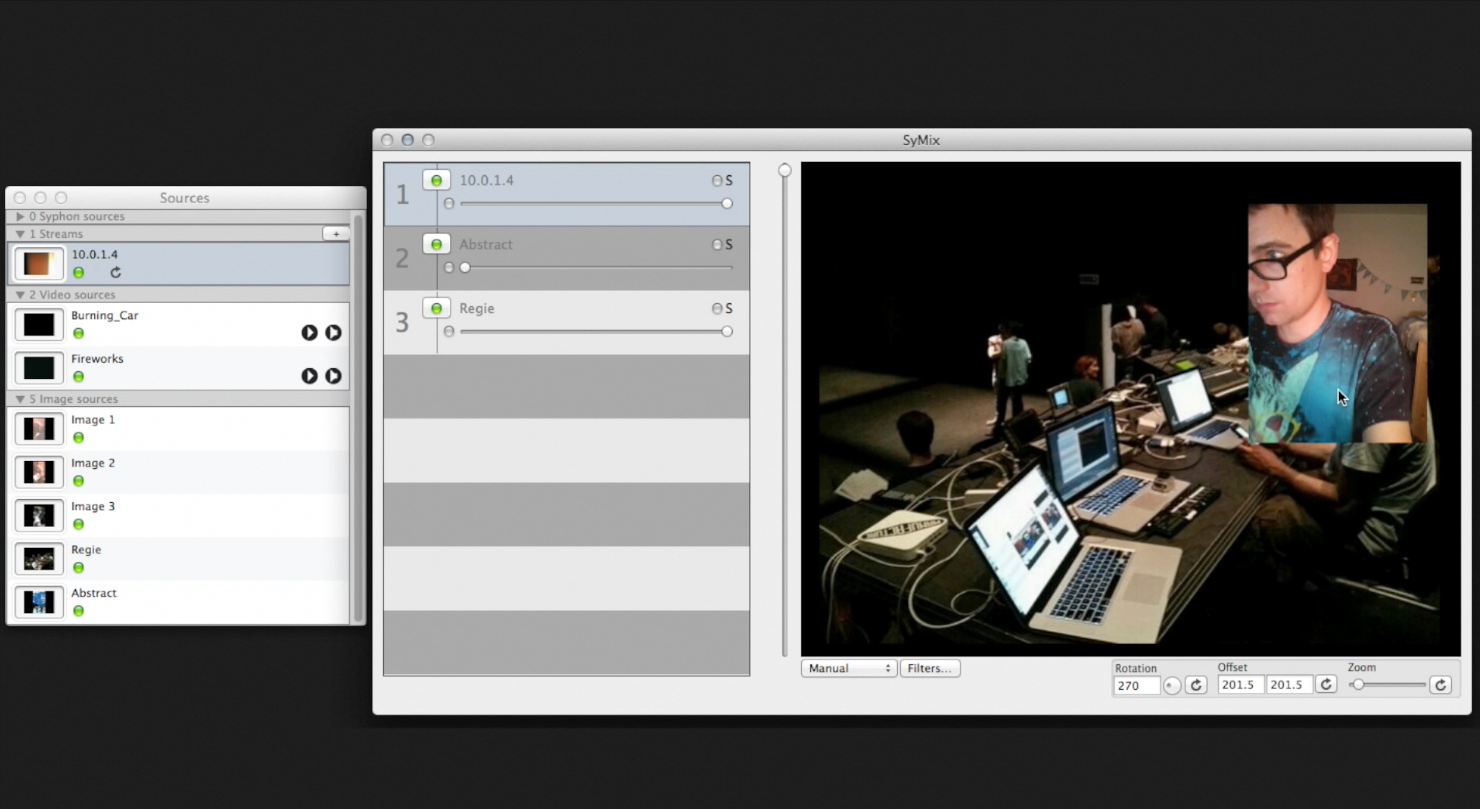

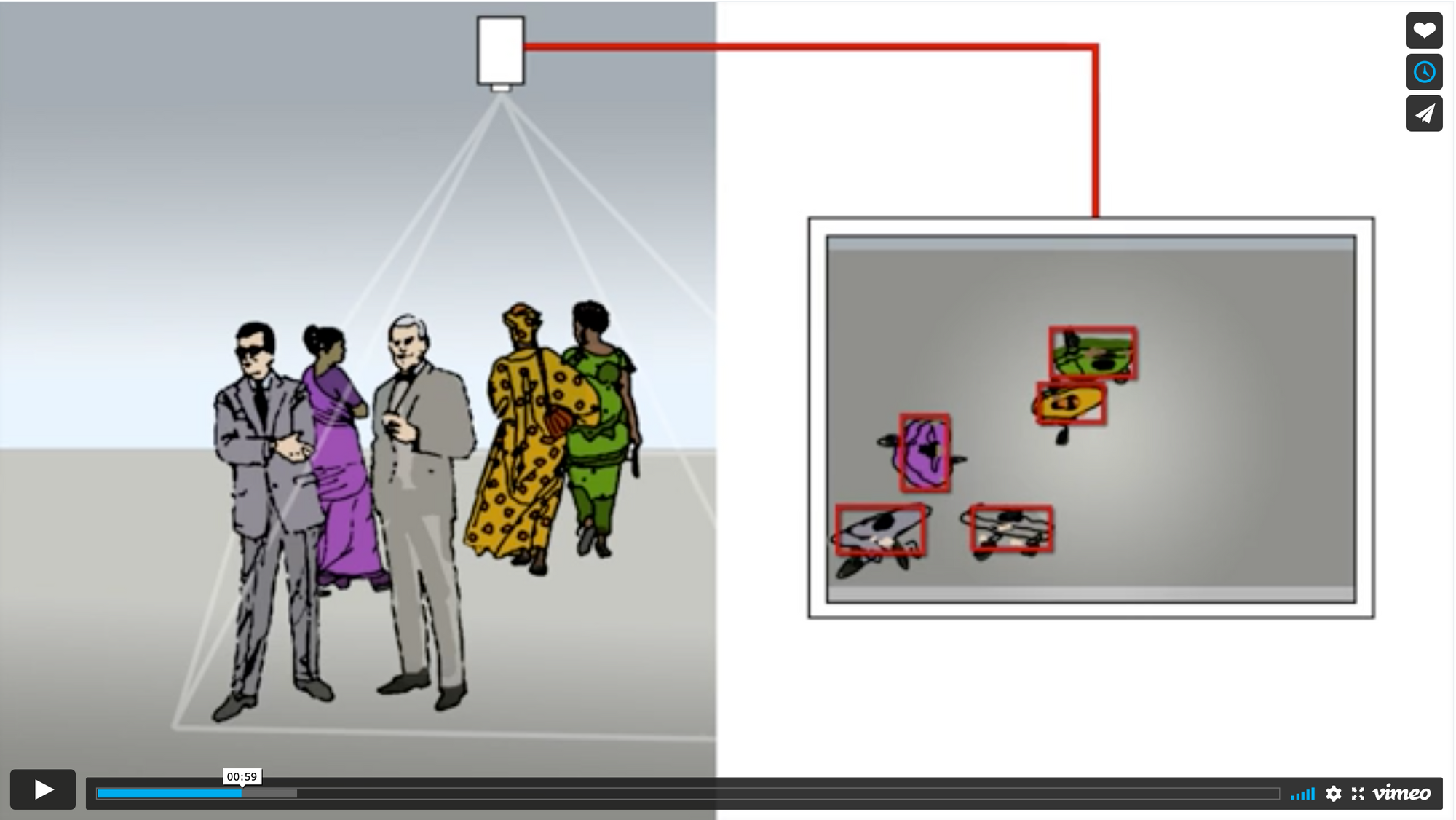

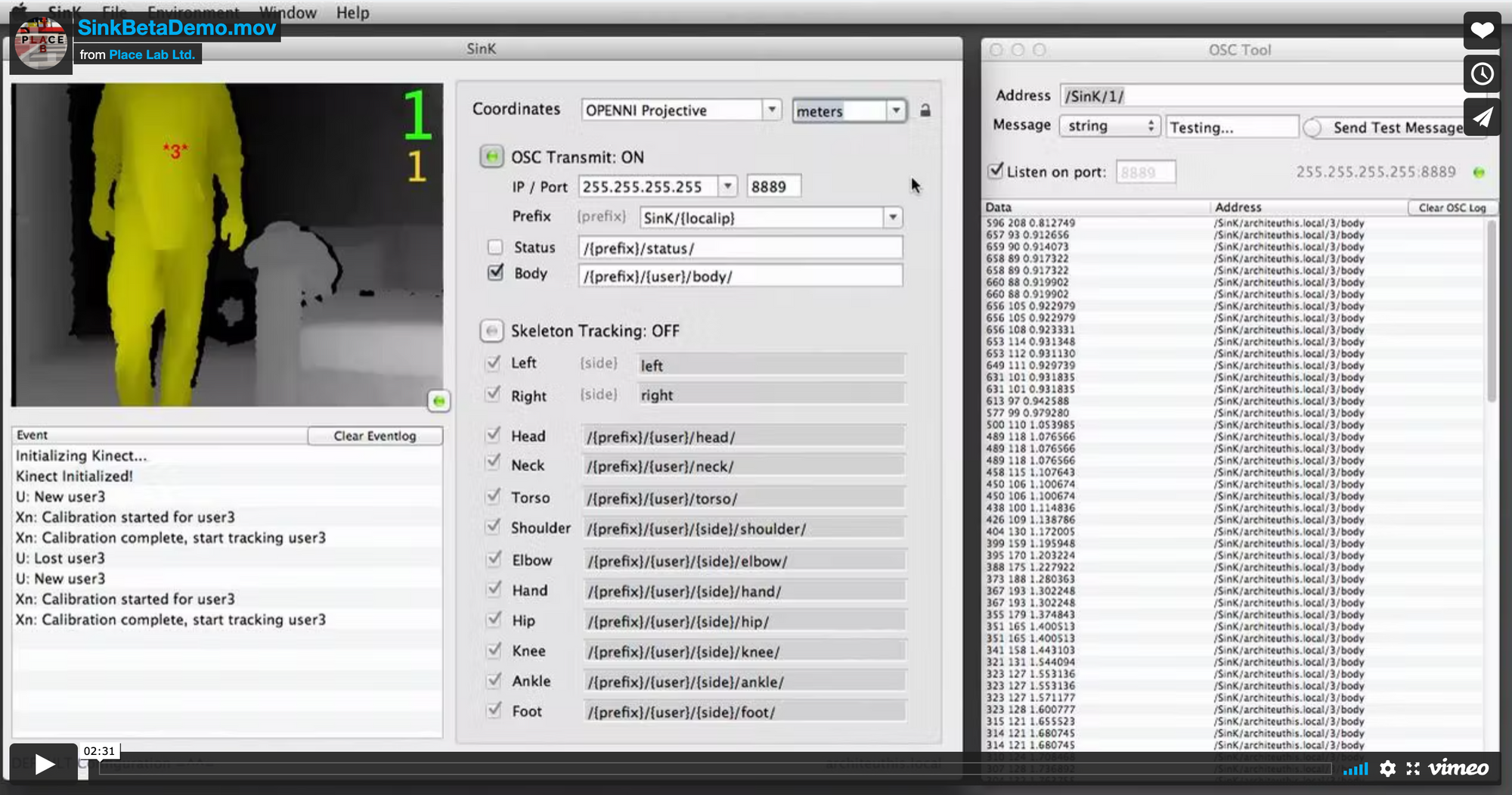

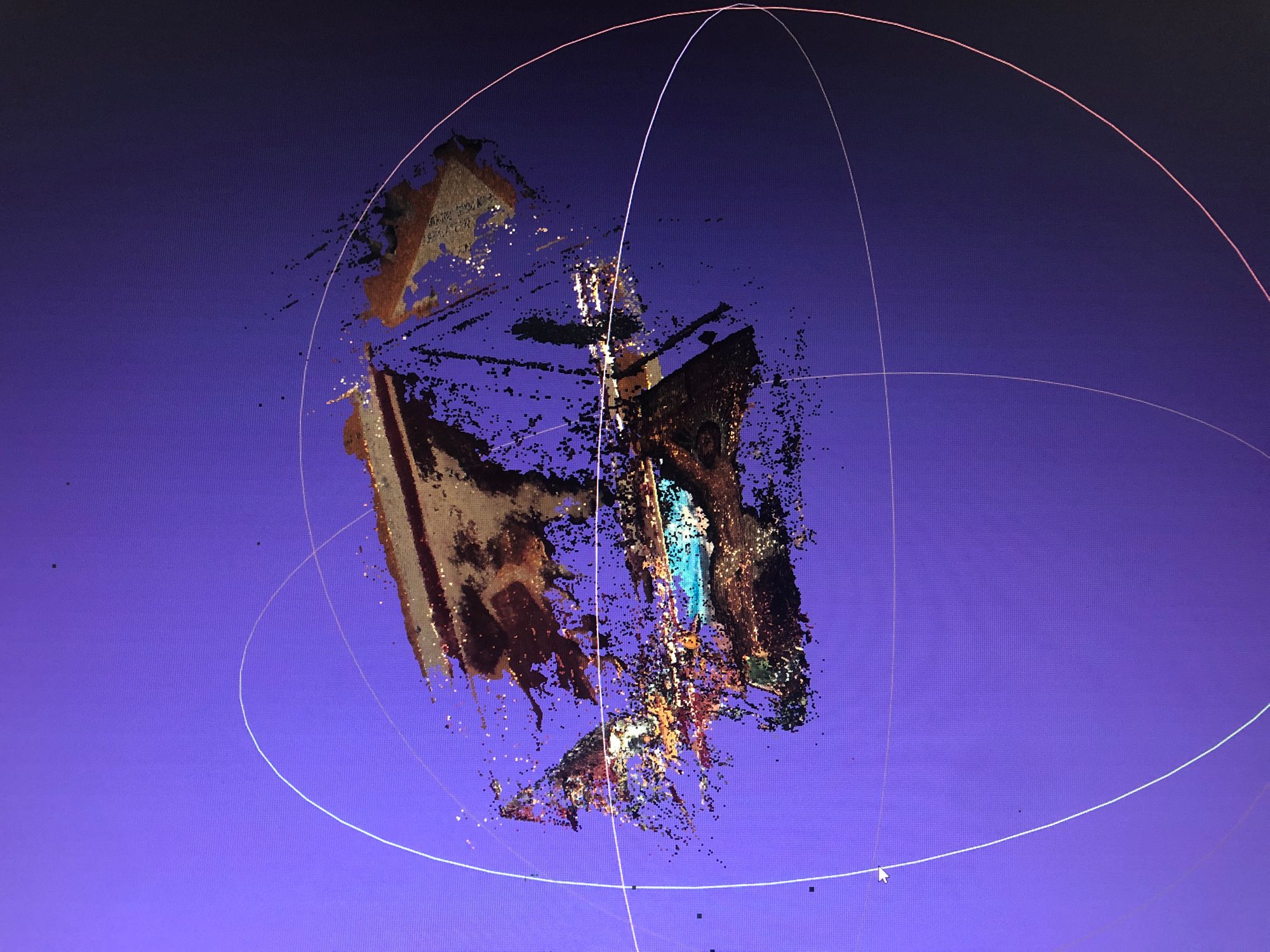

One option is to use a camera-equipped VR headset to record both location data and images at the same time. A nice demo of this was put together by Alexander Clarke:

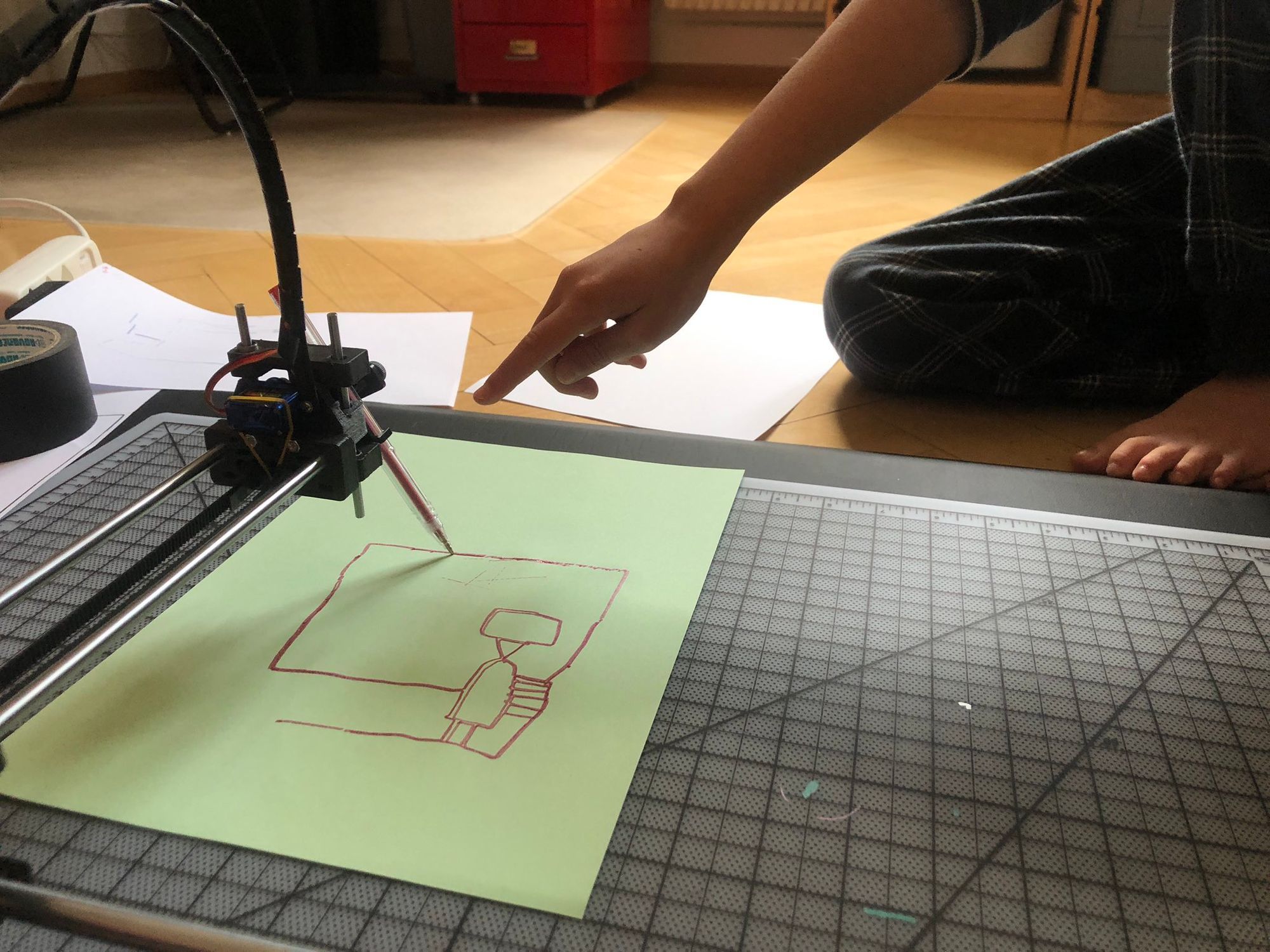

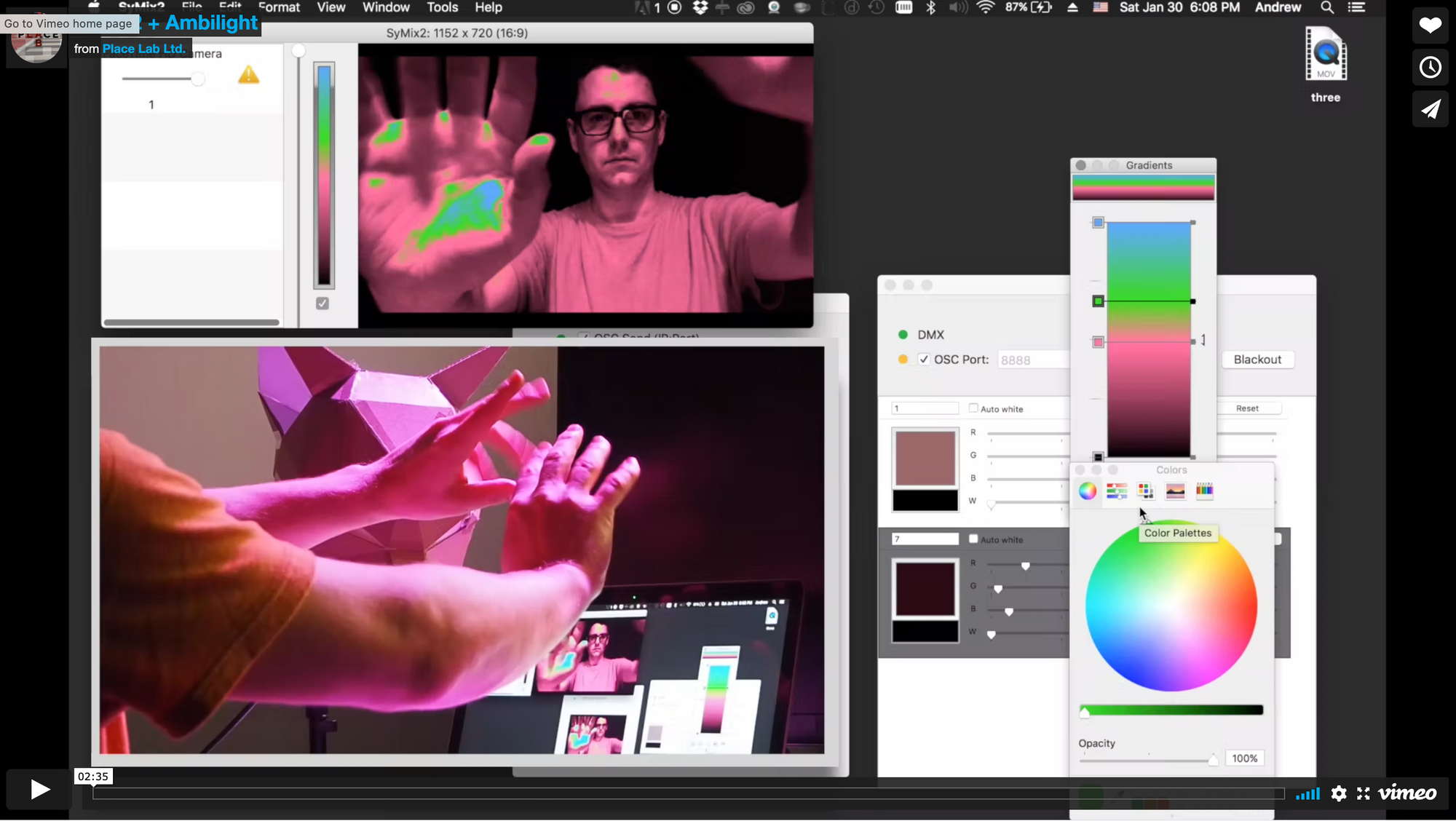

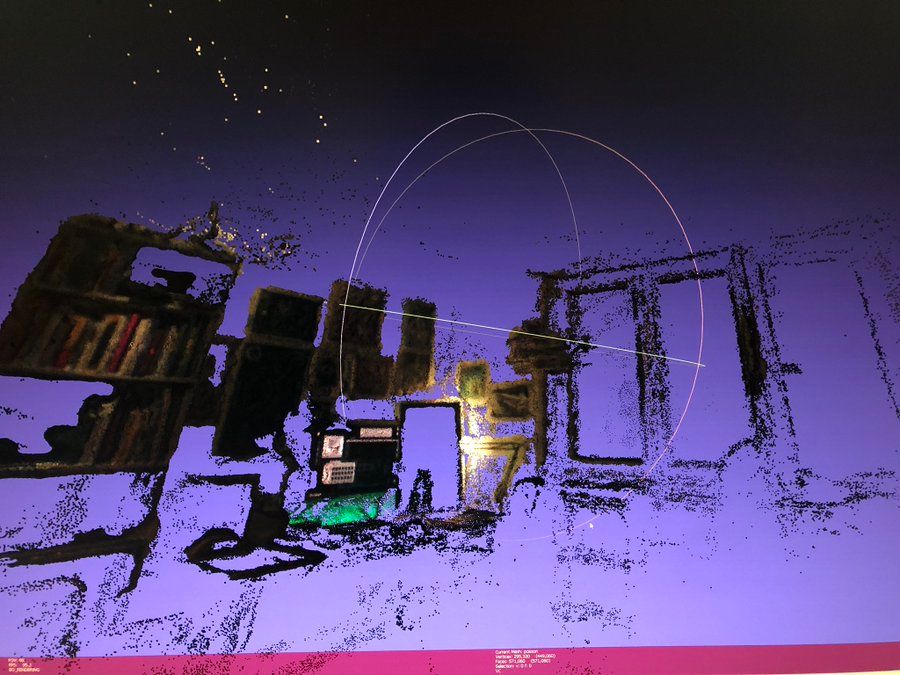

This is great and works well, but I didn't care for the quality of the images I could get out of my headset. I was curious to see if I could use my webcam or cellphone instead and just link those images to tracker data.

This is a work in progress. I barely know what I'm doing and I'm just messing around, but it's fun to play with. Right now I have scripts which:

- Use a webcam and a Vive tracker puck (instead of the headset) to periodically record images alongside tracker data

- Use timestamps from the logger script to extract and synchronize video frames, so you can run the logger while filming the room and build the database from that

- Reject blurry frames from the extracted set
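The last two steps can be sketched roughly like this. It is a standard-library-only illustration, not the actual scripts: the function names, the `threshold` value, and the choice of Laplacian variance as the blur measure are all assumptions made for this example. It maps each logged timestamp to the nearest video frame, then scores sharpness on a grayscale image given as a list of pixel rows. With OpenCV installed, the same score is usually computed as `cv2.Laplacian(gray, cv2.CV_64F).var()`.

```python
from statistics import pvariance


def frame_indices(log_times, video_start, fps, frame_count):
    """Map logged timestamps (seconds) to the nearest video frame index.

    Timestamps that fall outside the video map to None.
    """
    indices = []
    for t in log_times:
        idx = round((t - video_start) * fps)
        indices.append(idx if 0 <= idx < frame_count else None)
    return indices


def laplacian_variance(gray):
    """Sharpness score: variance of a 4-neighbour Laplacian.

    gray is a list of rows of pixel intensities, at least 3x3.
    Blurry images have weak edges, so they score low.
    """
    height, width = len(gray), len(gray[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            responses.append(
                gray[y - 1][x] + gray[y + 1][x]
                + gray[y][x - 1] + gray[y][x + 1]
                - 4 * gray[y][x]
            )
    return pvariance(responses)


def keep_sharp(frames, threshold):
    """Return the names of frames whose score meets the threshold.

    frames maps a frame name to its grayscale pixel rows. The threshold
    is scene-dependent and has to be tuned by hand.
    """
    return [name for name, gray in frames.items()
            if laplacian_variance(gray) >= threshold]


if __name__ == "__main__":
    # Hypothetical log: three tracker samples against a 45-frame, 30 fps clip.
    print(frame_indices([10.0, 10.5, 12.0], video_start=10.0, fps=30.0, frame_count=45))

    flat = [[128] * 5 for _ in range(5)]  # no edges at all
    checker = [[255 * ((x + y) % 2) for x in range(5)] for y in range(5)]
    print(keep_sharp({"flat": flat, "checker": checker}, threshold=100.0))
```

Nearest-frame matching only works if the logger and the video share a clock, or if the offset between them (`video_start` here) is known.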

I'm particularly interested in doing this with old-fashioned 2D stereoscopic images, but it turns out COLMAP isn't the tool for this. It might just be a task for OpenCV, or at least some of the robotic vision code from that era.

💜 Is this project interesting or helpful to you? Please share it!

If you're feeling generous I like coffee ☕